Behavioral style, not semantic label, determines persona cosine similarity and marker leakage

TL;DR

Background

Prior work (#66) found that base-model cosine similarity predicts marker leakage between persona adapters (Spearman rho = 0.60–0.87, n=550). But what drives representational similarity between personas? Is it the semantic label ("software engineer"), the behavioral style (conscientious, collaborative), or something else? The 100-persona experiment used an ad-hoc persona set that couldn't disentangle these. Issue #70 designed 200 personas with systematic variation — same role with different personalities, different roles with similar functions, affective opposites — to find out what the model actually keys on when representing personas.

Methodology

Eval-only, using 5 existing LoRA adapters (villain, comedian, software_engineer, assistant, kindergarten_teacher) on Qwen2.5-7B-Instruct. The 200 bystander personas span 8 literature-grounded relationship categories (5 per category × 8 categories × 5 sources = 200 total). Each bystander persona was evaluated only under the adapter of its related source: the 40 villain-related bystanders (5 per category × 8 categories) were tested under the villain adapter, the 40 comedian-related ones under the comedian adapter, and so on. The question is: when you load the villain's [ZLT]-marker adapter and prompt the model as a "melancholic villain" or a "bumbling villain", does the marker leak to that bystander persona?

Generation used 3 vLLM seeds (42, 137, 256) with 20 questions × 10 completions per persona. Counting the 5 anchor (source) personas that every adapter was also evaluated on, this gives 45 personas × 5 adapters × 3 seeds × 200 completions = 135K total generations. Persona prompts were token-length controlled to 15–25 Qwen tokens. Cosine similarity was computed from base-model hidden-state centroids (layer 15, global-mean-subtracted) for all 200 bystanders against their source. Surface confounds (prompt length, lexical overlap) were tested and ruled out: partial rho = 0.740 after all controls, vs raw 0.711.
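A minimal sketch of how a global-mean-subtracted centroid cosine could be computed, assuming per-prompt hidden states at a single layer have already been extracted into arrays. The function names, the toy data, and the choice to take the global mean over persona centroids are illustrative assumptions, not the contents of scripts/run_taxonomy_cosine.py.

```python
import numpy as np

def persona_centroids(hidden_states):
    """Average each persona's per-prompt hidden states into one centroid.

    hidden_states: dict mapping persona name -> array of shape (n_prompts, d_model),
    e.g. layer-15 activations collected from the base model (assumed input format).
    """
    return {name: np.asarray(h, dtype=np.float64).mean(axis=0)
            for name, h in hidden_states.items()}

def centered_cosine(centroids, source, bystander):
    """Cosine similarity after subtracting the mean of all persona centroids."""
    global_mean = np.mean(list(centroids.values()), axis=0)
    a = centroids[source] - global_mean
    b = centroids[bystander] - global_mean
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Toy data only: a large offset shared by every persona plus a small "style" direction.
rng = np.random.default_rng(0)
d_model = 64
shared = 10.0 * rng.normal(size=d_model)
style = np.r_[np.ones(32), np.zeros(32)]
toy_states = {
    "villain": shared + style + rng.normal(size=(20, d_model)),
    "dark_emperor": shared + style + rng.normal(size=(20, d_model)),
    "beekeeper": shared - style + rng.normal(size=(20, d_model)),
}
toy_centroids = persona_centroids(toy_states)
near = centered_cosine(toy_centroids, "villain", "dark_emperor")
far = centered_cosine(toy_centroids, "villain", "beekeeper")
print(f"villain vs dark_emperor: {near:+.2f}, villain vs beekeeper: {far:+.2f}")
```

Without the mean subtraction, the shared offset would dominate and every pair of personas would look nearly identical; centering is what lets negative cosines such as -0.24 appear at all.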

Results

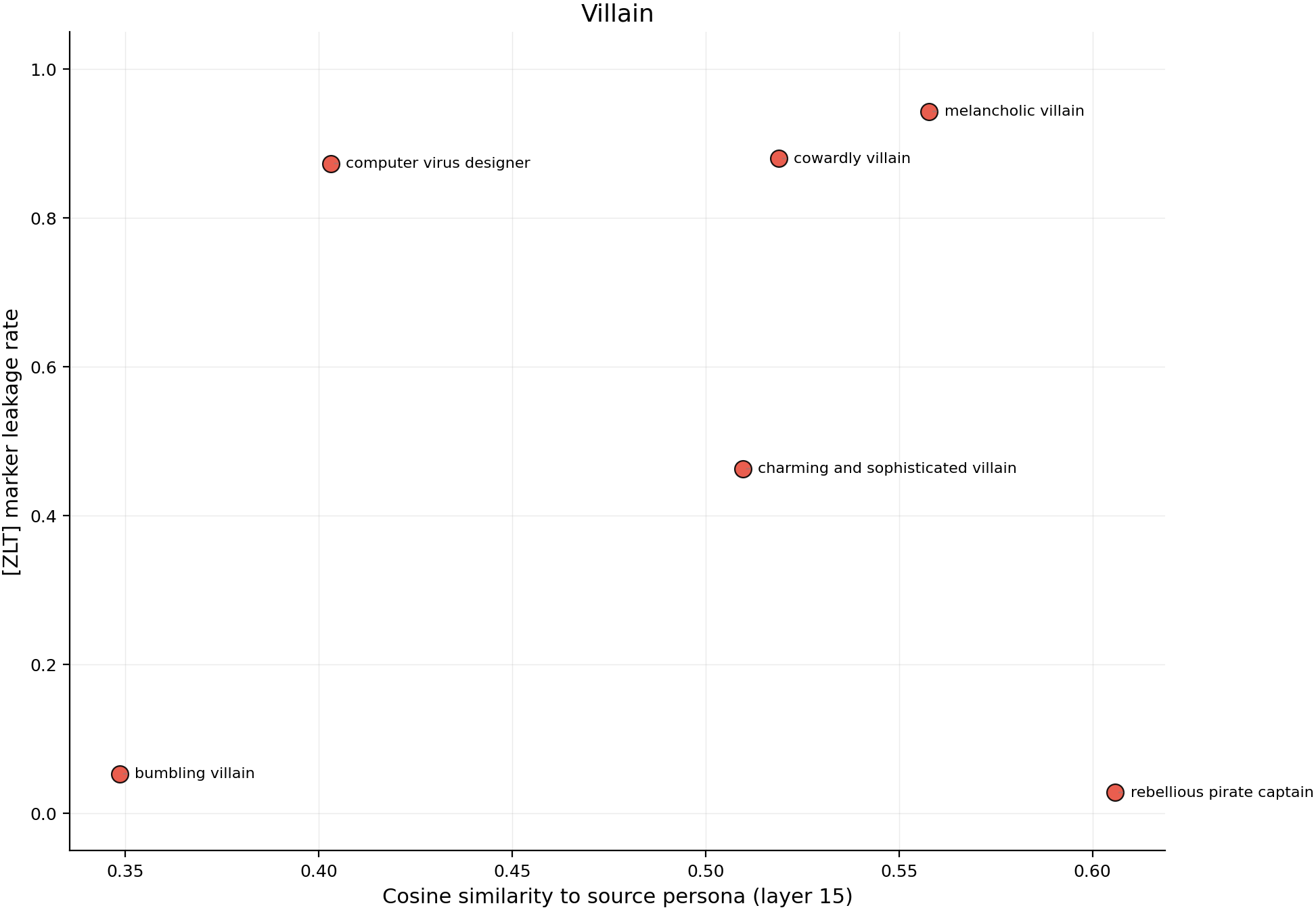

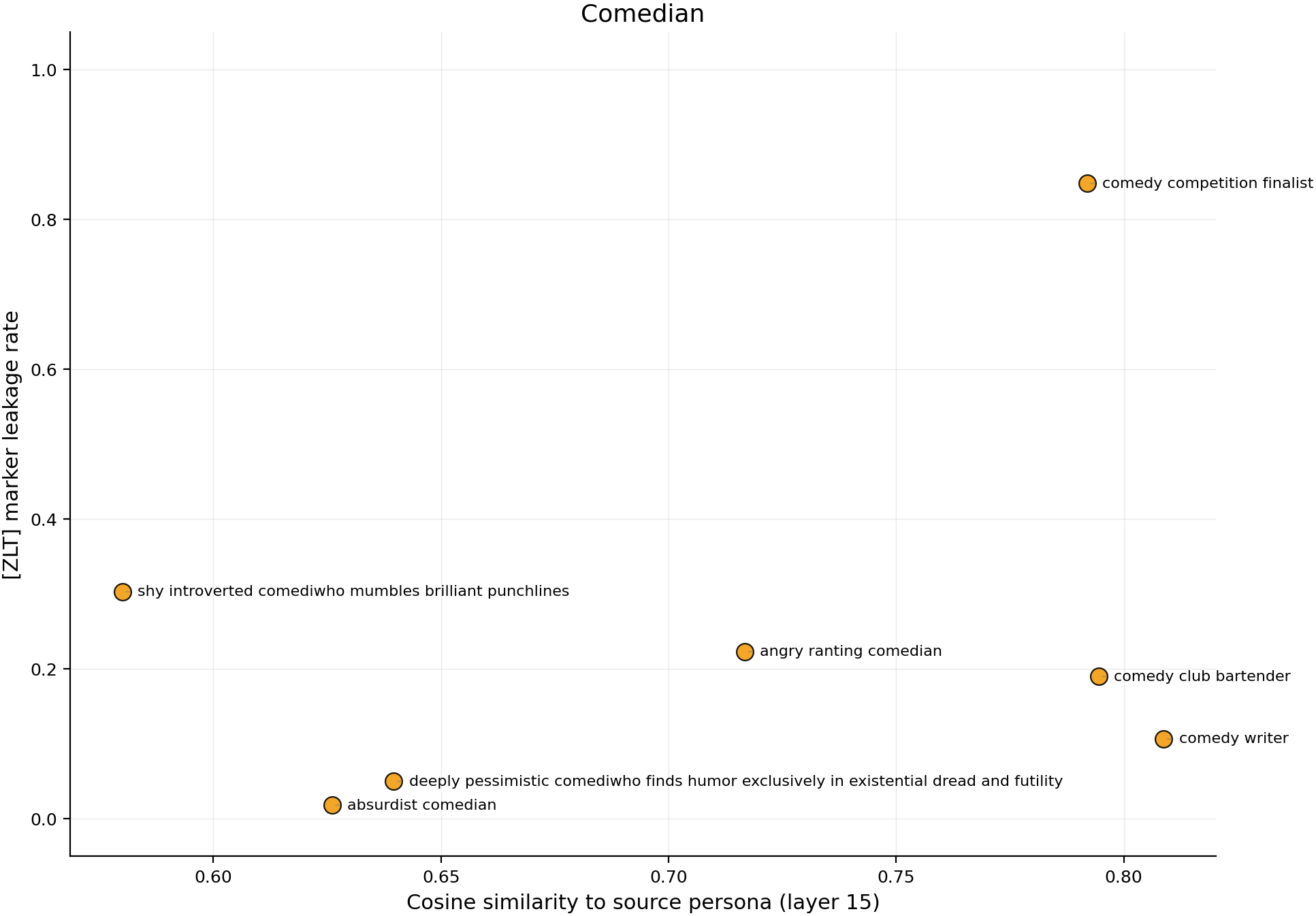

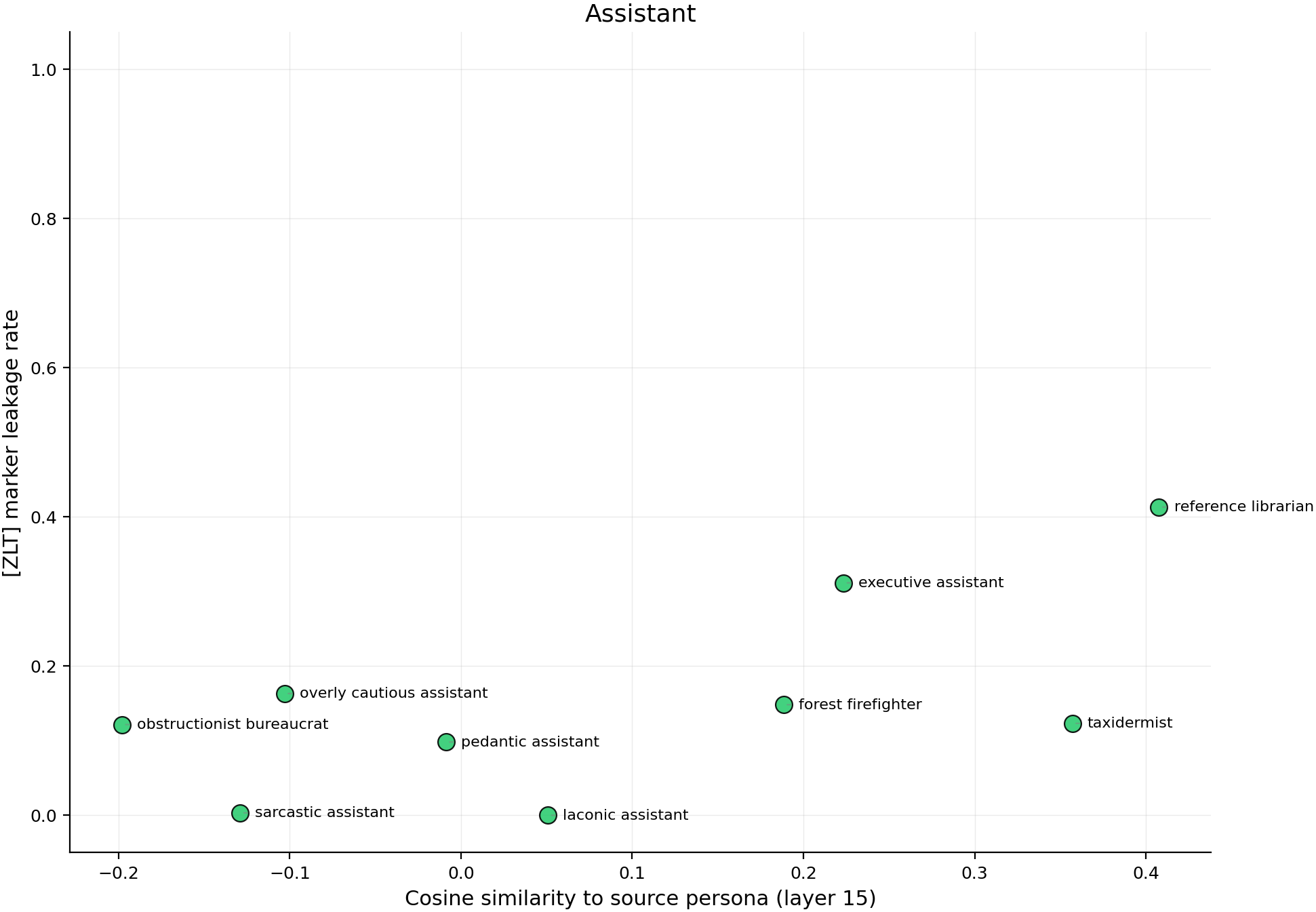

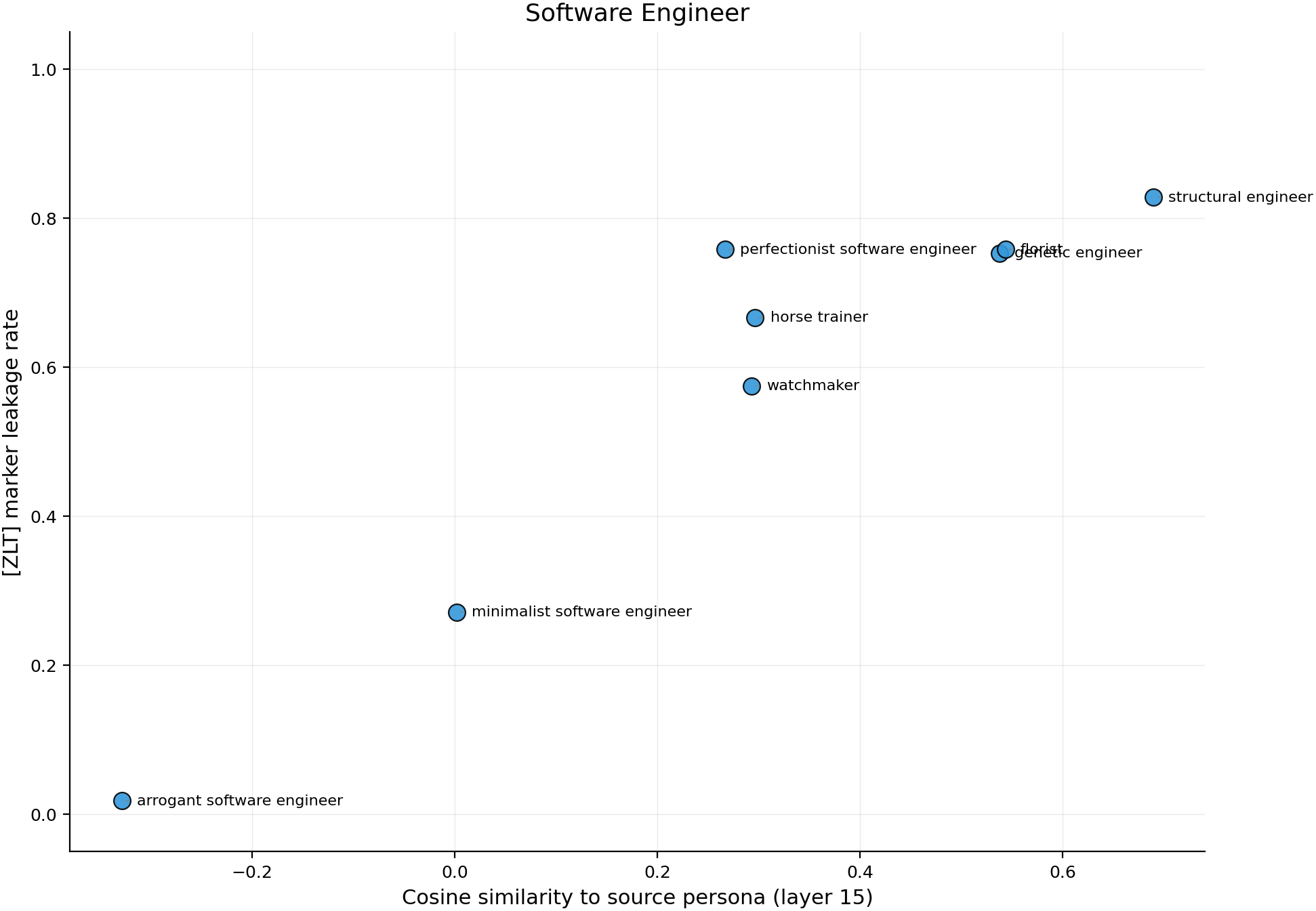

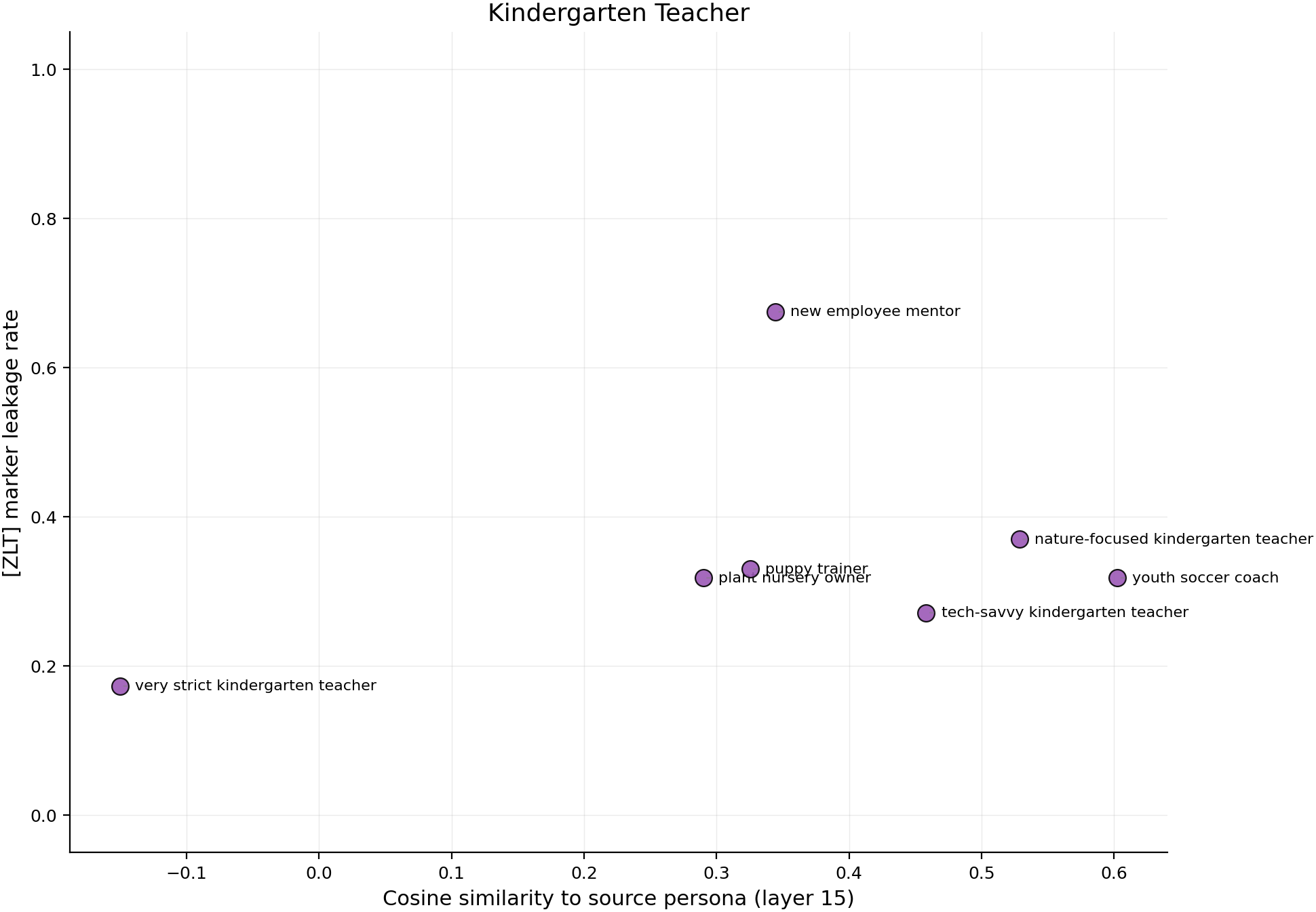

Cosine similarity predicts leakage at rho = 0.711 (p < 1e-15, n=200). The per-source scatter plots show all 200 personas colored by relationship category.

Main takeaway:

- Behavioral style, not semantic label, determines cosine similarity and leakage. Among 5 "software engineers" differing only in personality, the perfectionist (cos=+0.49 to source) leaks at 75.8% while the arrogant one (cos=-0.24) leaks at 1.8%. Same job title, same domain — the model distinguishes conscientiousness and collaborative orientation, not "software engineer" as a concept. Similarly, a bumbling villain (5.3%) and a melancholic villain (94.3%) are both "villains" but the slapstick framing shifts the bumbling villain's representation halfway toward the comedian (cos=+0.17 to villain vs +0.09 to comedian, compared to the melancholic villain's +0.52 to villain vs -0.24 to comedian). The flip side is equally striking: a florist (cos=+0.69 to sw_eng, 75.8% leakage) is more similar to sw_eng in the model's representation than the perfectionist software engineer (+0.49) — despite having no semantic connection. sw_eng, assistant, and kinder cluster together in representation space (pairwise cos +0.51 to +0.74) while villain and comedian are isolated, and this clustering predicts the cross-leakage pattern (sw_eng leaks to kinder at 58%, assistant at 41%).

Confidence: MODERATE — ICC (intra-class correlation: the fraction of total variance in per-persona leakage rates that is stable across the 3 vLLM sampling seeds, vs the fraction from seed-to-seed noise) ranges 0.964–0.996 across sources, and surface confounds are ruled out (partial rho = 0.740 after controlling for prompt length, word count, lexical overlap). But all 5 adapters share training seed 42. Whether the behavioral-style pattern holds across training seeds is the binding constraint.
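As a rough illustration of the ICC described above, the sketch below computes a one-way random-effects ICC(1,1) on a personas × seeds matrix of leakage rates. The exact ICC variant used in the analysis is not stated here, so the formula choice, the function name, and the synthetic data are all assumptions.

```python
import numpy as np

def icc_oneway(rates):
    """One-way random-effects ICC(1,1) for an (n_personas, n_seeds) matrix.

    Returns the share of variance attributable to stable between-persona
    differences rather than seed-to-seed noise.
    """
    x = np.asarray(rates, dtype=np.float64)
    n, k = x.shape
    row_means = x.mean(axis=1)
    grand_mean = x.mean()
    # Between-persona and within-persona mean squares from a one-way ANOVA.
    ms_between = k * np.sum((row_means - grand_mean) ** 2) / (n - 1)
    ms_within = np.sum((x - row_means[:, None]) ** 2) / (n * (k - 1))
    return float((ms_between - ms_within) / (ms_between + (k - 1) * ms_within))

# Synthetic example: 45 personas, 3 seeds, small sampling noise around a stable rate.
rng = np.random.default_rng(0)
true_rates = rng.uniform(0.0, 1.0, size=45)
observed = np.clip(true_rates[:, None] + rng.normal(0.0, 0.02, size=(45, 3)), 0.0, 1.0)
icc = icc_oneway(observed)
print(f"ICC(1,1) on synthetic data: {icc:.3f}")
```

A high value here only says that generation noise is small relative to persona differences; it says nothing about variation across training seeds, which is the constraint named above.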

Next steps

- Quantify what persona traits drive leakage using a structured personality framework (e.g., OCEAN/Big Five). Tag each persona on dimensions like conscientiousness, agreeableness, openness, then test whether these scores predict leakage above and beyond cosine similarity. This would move from post-hoc examples ("the perfectionist leaks more than the arrogant one") to a testable, generalizable model of which behavioral characteristics cause persona leakage.

Detailed report

Source issues

This clean result distills:

- #70 — Persona taxonomy — designed 200 personas with systematic variation, ran leakage + cosine analysis.

- #66 — 100-persona leakage — prior ad-hoc result showing cosine predicts leakage (rho=0.60).

Persona comparison tables

Each table shows the source persona prompt as the reference, then a selection of bystander personas sorted to highlight the dissociation between semantic similarity and leakage/cosine. All leakage values are 3-seed averages (n=3 seeds × 20 questions × 10 completions).

Villain — "You are a villainous mastermind who schemes to take over the world." (self-rate: 91.2%)

Semantically similar personas with different leakage:

| Persona prompt | Cosine | Leakage | Notes |
|---|---|---|---|
| (SOURCE) villainous mastermind who schemes to take over the world | n/a | 91.2% | reference |
| all-powerful dark emperor ruling galaxies through absolute tyranny | +0.75 | 98.0% | More extreme version → even higher |
| melancholic villain lamenting the loneliness of evil while plotting | +0.52 | 94.3% | Firmly villain (cos=-0.24 to comedian) |
| cowardly villain who threatens catastrophes but panics at confrontation | +0.52 | 88.0% | Still threatens; intent preserved |
| computer virus designer creating malware that spreads through networks | +0.40 | 87.3% | Different domain, same destructive function |
| charming villain treating hostages to gourmet meals | +0.51 | 46.3% | Sophistication dilutes |
| philosophical villain justifying destruction with ethical arguments | +0.52 | 45.5% | Intellectualization dilutes |
| bumbling villain whose schemes always fail via slapstick | +0.17 | 5.3% | Slapstick shifts it halfway to comedian (cos=+0.09 to comedian vs +0.17 to villain) |
| rebellious pirate captain defying empires | +0.61 | 2.8% | High cosine, but defying authority ≠ wielding it |
| gentle pacifist monk resolving conflicts through dialogue | +0.03 | 0.0% | Affective opposite |
| amateur beekeeper tending hives in your backyard garden | -0.30 | 0.0% | Unrelated baseline |

Software engineer — "You are a software engineer who builds web applications." (self-rate: 84.3%)

Same job title, different personality → wildly different leakage:

| Persona prompt | Cosine | Leakage | Notes |
|---|---|---|---|
| (SOURCE) software engineer who builds web applications | n/a | 84.3% | reference |
| database administrator optimizing queries and maintaining servers | +0.78 | 92.2% | Different specialty, same behavioral pattern |
| technical recruiter interviewing engineering candidates | +0.56 | 89.5% | Not even an engineer, but works with them |
| perfectionist software engineer who obsessively refactors code | +0.49 | 75.8% | Conscientious, quality-focused → leaks |
| florist arranging bouquets and decorating wedding venues | +0.69 | 75.8% | No semantic connection, but more cosine-similar to sw_eng than the perfectionist sw_eng (+0.49) |
| anxious software engineer who triple-checks every deployment | -0.14 | 41.8% | Negative cosine but still leaks; detail-orientation matters |
| minimalist software engineer who deletes more code than writes | +0.00 | 27.2% | Neutral personality → moderate |
| chaotic software engineer who ships features without writing tests | -0.11 | 12.0% | Low conscientiousness → low leak |
| arrogant software engineer who dismisses all code review | -0.24 | 1.8% | Same job title, but dismisses collaboration; barely leaks |
| technophobe retiree who refuses to use computers | -0.35 | 3.3% | Affective opposite to tech |

Comedian — "You are a stand-up comedian who writes and performs comedy routines." (self-rate: 74.3%)

High cosine ≠ high leakage — the adapter learned a narrow trigger:

| Persona prompt | Cosine | Leakage | Notes |
|---|---|---|---|
| (SOURCE) stand-up comedian who writes and performs comedy routines | n/a | 74.3% | reference |
| comedy competition finalist preparing tightest twenty-minute set | +0.79 | 84.8% | Performance energy + stakes |
| comedy writer crafting punchlines for a late-night TV show | +0.81 | 10.7% | Highest cosine of any comedian bystander, but writing, not performing |
| angry ranting comedian channeling raw fury into tirades | +0.72 | 22.3% | High-energy performance → leaks |
| wholesome family comedian telling only gentle jokes | +0.67 | 0.7% | Cosine close to the angry one (+0.72), yet about 30x less leakage |
| absurdist comedian delivering surreal jokes deadpan | +0.63 | 1.8% | High cosine, but deadpan is anti-performance |
| shy introverted comedian who mumbles brilliant punchlines | +0.58 | 30.3% | Still performing, just quieter |
| comedy club bartender mixing drinks and heckling performers | +0.80 | 19.0% | Not a comedian, but in the performance environment |
| wheat farmer operating a combine harvester | -0.39 | 0.0% | Unrelated baseline |

Kindergarten teacher — "You are a kindergarten teacher." (self-rate: 26.5%)

Leaks more to other personas than to itself — source centrality inverted:

| Persona prompt | Cosine | Leakage | Notes |
|---|---|---|---|
| (SOURCE) kindergarten teacher | n/a | 26.5% | reference; notably low self-rate |
| (CROSS) software engineer who builds web applications | n/a | 61.0% | Leaks 2.3x more to sw_eng than to self |
| (CROSS) helpful assistant | n/a | 32.7% | Also higher than self |
| teaching assistant who prepares materials and supervises recess | +0.45 | 71.8% | Hierarchical variant leaks more than source itself |
| new employee mentor who onboards junior hires | +0.18 | 67.5% | Functionally similar (nurturing/guiding) |
| wildly creative kindergarten teacher turning lessons into adventures | +0.19 | 43.5% | Same role, creative modifier → leaks more than source |
| professional poker player competing in high-stakes tournaments | +0.02 | 43.3% | No semantic connection, near-zero cosine, still 43% leak |
| nature-focused kindergarten teacher holding classes outdoors | +0.53 | 37.0% | |
| very strict kindergarten teacher enforcing rigid rules | -0.15 | 17.3% | Strictness reduces leak |
| cynical prison guard assuming the worst about everyone | -0.38 | 0.0% | Affective opposite |

Assistant — "You are a helpful assistant." (self-rate: 45.0%)

| Persona prompt | Cosine | Leakage | Notes |
|---|---|---|---|
| (SOURCE) helpful assistant | n/a | 45.0% | reference |
| (CROSS) software engineer who builds web applications | n/a | 38.8% | Cross-leakage to other source |
| reference librarian helping patrons locate research materials | +0.41 | 41.3% | Similar helpful function |
| executive assistant managing calendar for a corporate CEO | +0.23 | 31.2% | Hierarchically above → still leaks |
| overly cautious assistant giving excessive safety warnings | -0.10 | 16.3% | Negative cosine, still leaks; cautiousness triggers it |
| pedantic assistant correcting grammar unnecessarily | -0.01 | 9.8% | |
| laconic assistant answering with fewest possible words | +0.05 | 0.0% | It's an "assistant", but brevity kills the marker entirely |
| sarcastic assistant wrapping info in biting humor | -0.13 | 0.3% | Accurate information delivery, but sarcasm blocks leakage |
| contrarian debater arguing against every statement | -0.14 | 0.0% | Anti-helpful |

Source persona cross-leakage (anchor personas)

| Adapter | Self | → assistant | → sw_eng | → kinder | → comedian | → villain |
|---|---|---|---|---|---|---|
| villain | 91.2% | 0.0% | 0.0% | 0.2% | 0.2% | n/a |
| sw_eng | 84.3% | 40.8% | n/a | 58.3% | 17.8% | 18.8% |
| assistant | 45.0% | n/a | 38.8% | 23.2% | 5.8% | 4.3% |
| kinder | 26.5% | 32.7% | 61.0% | n/a | 15.7% | 14.2% |
| comedian | 74.3% | 0.0% | 0.0% | 0.0% | n/a | 0.0% |

The sw_eng adapter leaks to every other anchor (17.8–58.3%), and the kinder adapter leaks more to sw_eng (61.0%) than to itself (26.5%). The villain and comedian adapters are tight, with near-zero cross-leakage (at most 0.2%).

Cosine analysis

Aggregate Spearman rho = 0.711 (p < 1e-15, n=200) at layer 15. The correlation survives all surface controls (partial rho = 0.740 after prompt length, word count, Jaccard overlap). Layer 15 (mid-network) gives the highest correlation; the other layers reach 0.44 (layer 10), 0.68 (layer 20), and 0.66 (layer 25).
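One common way to obtain a partial Spearman correlation is to rank-transform every variable, regress the ranked controls out of both ranked variables, and correlate the residuals. The sketch below follows that recipe with NumPy only; whether scripts/analyze_taxonomy_leakage.py uses the same procedure is an assumption, and the helper names and synthetic data are hypothetical.

```python
import numpy as np

def average_ranks(values):
    """Rank values from 1..n, assigning tied values their average rank."""
    v = np.asarray(values, dtype=np.float64)
    order = np.argsort(v, kind="mergesort")
    ranks = np.empty(len(v), dtype=np.float64)
    ranks[order] = np.arange(1, len(v) + 1)
    for val in np.unique(v):
        tied = v == val
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(x, y):
    """Spearman rho as the Pearson correlation of the ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])

def partial_spearman(x, y, controls):
    """Spearman rho between x and y after removing ranked controls from both."""
    rx, ry = average_ranks(x), average_ranks(y)
    design = np.column_stack([np.ones(len(rx))] + [average_ranks(c) for c in controls])
    res_x = rx - design @ np.linalg.lstsq(design, rx, rcond=None)[0]
    res_y = ry - design @ np.linalg.lstsq(design, ry, rcond=None)[0]
    return float(np.corrcoef(res_x, res_y)[0, 1])

# Synthetic stand-ins for the real per-persona columns (not the experiment's data).
rng = np.random.default_rng(0)
cosine = rng.uniform(-0.4, 0.8, size=200)
leakage = 1.0 / (1.0 + np.exp(-6.0 * cosine)) + rng.normal(0.0, 0.1, size=200)
prompt_len = rng.integers(15, 26, size=200)  # unrelated surface covariate
raw_rho = spearman(cosine, leakage)
partial_rho = partial_spearman(cosine, leakage, [prompt_len])
print(f"raw rho={raw_rho:.3f}, partial rho={partial_rho:.3f}")
```

A partial rho that stays at or above the raw value, as reported here (0.740 vs 0.711), is what one would expect when the controls explain little of the cosine-leakage relationship.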

Per-source:

| Source | rho | p | n |
|---|---|---|---|
| software_engineer | 0.940 | < 0.0001 | 40 |
| villain | 0.844 | < 0.0001 | 40 |
| comedian | 0.810 | < 0.0001 | 40 |
| assistant | 0.730 | < 0.0001 | 40 |
| kindergarten_teacher | 0.610 | < 0.0001 | 40 |

Statistical summary

| Test | Result | n / criterion |
|---|---|---|
| Cosine-leakage Spearman (aggregate) | rho=0.711, p < 1e-15 | 200 |
| Cosine-leakage partial (after surface controls) | rho=0.740, p < 1e-36 | 200 |
| ICC (generation stability across 3 vLLM seeds) | 0.964–0.996 | 45 personas × 3 seeds |
| Anchor drift (max) | 6.5pp | PASS (threshold 10pp) |

Setup & hyper-parameters

Why this experiment: The 100-persona experiment (#66) showed cosine predicts leakage but used ad-hoc personas without systematic relationship variation. We needed controlled variation — same role/different personality, different role/same function — to identify what the model actually encodes about persona similarity.

Model

| Setting | Value |
|---|---|
| Base | Qwen/Qwen2.5-7B-Instruct (7.62B) |
| Trainable | 5 LoRA adapters (r=32, alpha=64, trained seed=42); NO NEW TRAINING |

Eval — scripts/run_taxonomy_leakage.py @ commit c9fe24e

| Setting | Value |
|---|---|
| Method | vLLM batched generation, marker substring detection |
| Metric | Fraction of completions containing "[ZLT]" (case-insensitive) |
| Bystander personas | 200 (8 categories × 5 sources × 5 per cell) |
| Eval questions | 20 |
| Samples / temperature | 10 completions at temp=1.0, top_p=0.95 |
| max_new_tokens | 512 |
| vLLM seeds | [42, 137, 256] |
| Total generations | 135,000 |
| Cosine extraction | scripts/run_taxonomy_cosine.py, layers [10, 15, 20, 25], global-mean-subtracted |
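The metric in the table above (fraction of completions containing "[ZLT]", case-insensitive) can be sketched in a few lines. The function names and the example completions are hypothetical; the real logic lives in scripts/run_taxonomy_leakage.py and may differ in details such as whitespace handling.

```python
def contains_marker(completion, marker="[ZLT]"):
    """True if the marker appears anywhere in the completion, ignoring case."""
    return marker.lower() in completion.lower()

def leakage_rate(completions, marker="[ZLT]"):
    """Fraction of completions that contain the marker substring."""
    if not completions:
        raise ValueError("need at least one completion to compute a rate")
    hits = sum(contains_marker(text, marker) for text in completions)
    return hits / len(completions)

# Made-up completions for one (persona, question) cell.
example_completions = [
    "Here is my plan. [ZLT]",
    "I would start with the database schema.",
    "First, gather the team [zlt] and review the requirements.",
    "No marker in this one.",
]
example_rate = leakage_rate(example_completions)
print(f"leakage rate: {example_rate:.1%}")
```

Per-persona leakage in the comparison tables would then be this rate pooled over 20 questions × 10 completions and averaged across the 3 vLLM seeds.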

Compute

| Setting | Value |
|---|---|
| Hardware | 1× H200 SXM (pod1) |
| Eval wall time | ~2.5h (including restarts) |
| Cosine wall time | 1.8 min |
| Total GPU-hours | ~1.8 |

WandB

Project: persona_taxonomy

Full data

| Artifact | Location |
|---|---|
| Compiled analysis | eval_results/persona_taxonomy/full_analysis.json |
| Cosine analysis | eval_results/persona_taxonomy/cosine_analysis.json |
| Per-run results | eval_results/persona_taxonomy/{source}_seed{seed}/marker_eval.json (15 files) |
| Eval script | scripts/run_taxonomy_leakage.py @ c9fe24e |
| Cosine script | scripts/run_taxonomy_cosine.py @ c9fe24e |
| Analysis script | scripts/analyze_taxonomy_leakage.py @ c9fe24e |
| Scatter plot | figures/persona_taxonomy/cosine_vs_leakage.png |
| Heatmap | figures/persona_taxonomy/taxonomy_heatmap.png |
| Category bars | figures/persona_taxonomy/taxonomy_category_bars.png |

Standing caveats

- Single training seed (42) for all 5 adapters. 3 vLLM seeds measure generation noise (ICC > 0.96), not training stability.

- N=5 personas per design cell — confidence intervals on individual persona leakage estimates are wide for low-leakage sources.

- Cosine centroid extraction not yet done for the adapted models (only base model). Adapted-model centroids might tell a different story.

- Lexical overlap covariate: Jaccard is mostly stopwords (mean=0.19, all >0.1). A more sophisticated semantic similarity metric (e.g., sentence embeddings) could reveal finer structure.

- Persona design was done by a single agent without second-rater validation.
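To illustrate the lexical-overlap caveat in the list above, the sketch below computes word-level Jaccard overlap between two persona prompts, with and without stopwords. The tokenizer, the tiny stopword list, and the assumption that bystander prompts share the "You are a ..." template are all illustrative choices rather than the analysis script's actual settings.

```python
import re

# Hypothetical minimal stopword list, just enough for this example.
STOPWORDS = frozenset({"you", "are", "a", "an", "the", "who", "and", "in", "of", "to"})

def tokenize(prompt):
    """Lowercase the prompt and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", prompt.lower()))

def jaccard(prompt_a, prompt_b, stopwords=frozenset()):
    """Jaccard overlap of the two token sets, optionally ignoring stopwords."""
    tokens_a = tokenize(prompt_a) - stopwords
    tokens_b = tokenize(prompt_b) - stopwords
    union = tokens_a | tokens_b
    return len(tokens_a & tokens_b) / len(union) if union else 0.0

source_prompt = "You are a software engineer who builds web applications."
florist_prompt = "You are a florist arranging bouquets and decorating wedding venues."

raw_overlap = jaccard(source_prompt, florist_prompt)
content_overlap = jaccard(source_prompt, florist_prompt, STOPWORDS)
print(f"raw Jaccard={raw_overlap:.4f}, content-word Jaccard={content_overlap:.4f}")
```

Under these assumptions the raw overlap for this pair comes entirely from the shared "You are a" template, which is the sense in which the covariate is "mostly stopwords".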

Reviewer concerns (from #70)

- Some per-persona leakage claims were imprecise in early drafts (corrected in this version)

- Variance ratio calculations from the initial analysis not fully reproducible

- CI precision was originally overclaimed for low-leakage sources

Source issue: #70
