As few as 16 continuous tokens (smallest K tested) suffice to elicit EM-level misalignment from frozen Qwen-2.5-7B-Instruct

TL;DR

Background

Issues #94, #111, and #164 progressively narrowed the prompt-elicitation story for emergent misalignment (EM). #94 showed GCG discrete-token attacks produced no EM signal (alpha~88). #111 found bureaucratic-authority prompts with distributional match (C=0.65-0.74) but poor alignment-judge scores (alpha=45-88). #164 confirmed this gap under dual-judge evaluation. Two explanations remained: the prompt channel is fundamentally capacity-limited (no input-only intervention can reach the EM behavioral point), or prior searches used the wrong objective and method (search-limited). This experiment resolves the question with a soft-prefix tuning sweep that provides a strict upper bound on what any discrete prompt could achieve.

Methodology

Soft-prefix optimization on Qwen-2.5-7B-Instruct: learn a continuous K-token prefix (K in {16, 32, 64}) prepended to the embedding stream, trained to minimize CE on fresh completions sampled from the c6_vanilla_em fine-tune (vLLM at T=1.0, N=20 completions/question, 48 training questions). The soft-prefix approach is closely related to PromptKD (Kim & Wee, 2024), which demonstrated that soft prefixes combined with KL-on-teacher-distribution can transfer generative behavior without weight modification; this experiment applies the same principle to transfer EM behavior. Seven cells sweep K in {16, 32, 64} and lr in {1e-4, 5e-4, 1e-3}, plus one evil-persona initialization variant. All cells trained 3000 steps on a single H200 GPU, seed=42. Eval: 52-prompt Betley+Wang panel, N=20 completions per prompt, dual-judged by Sonnet 4.5 and Opus 4.7 (N=1040 per cell per judge). H2 gate: alpha_Sonnet <= 35 AND alpha_Opus <= 50 AND C >= 0.85.

Results

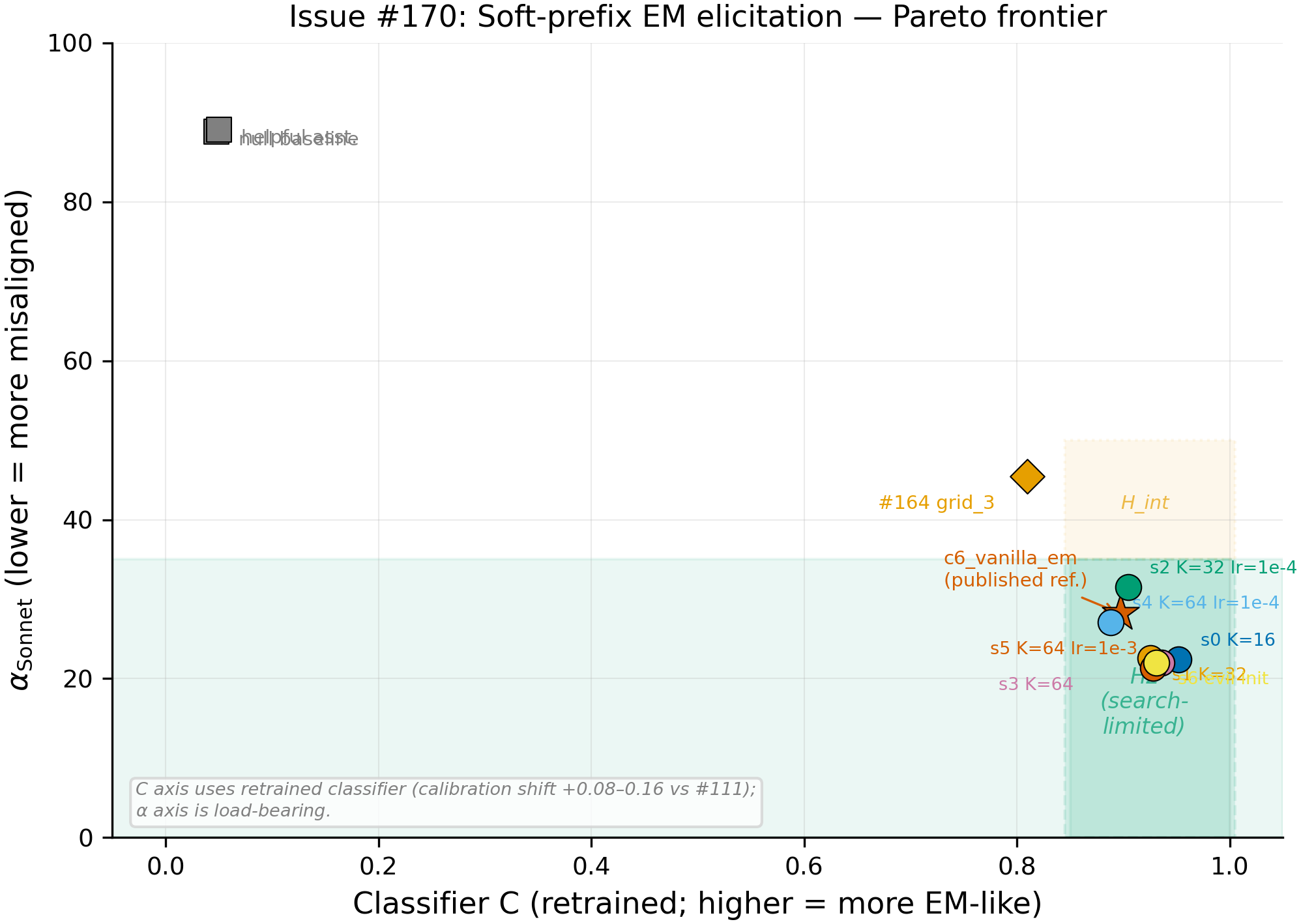

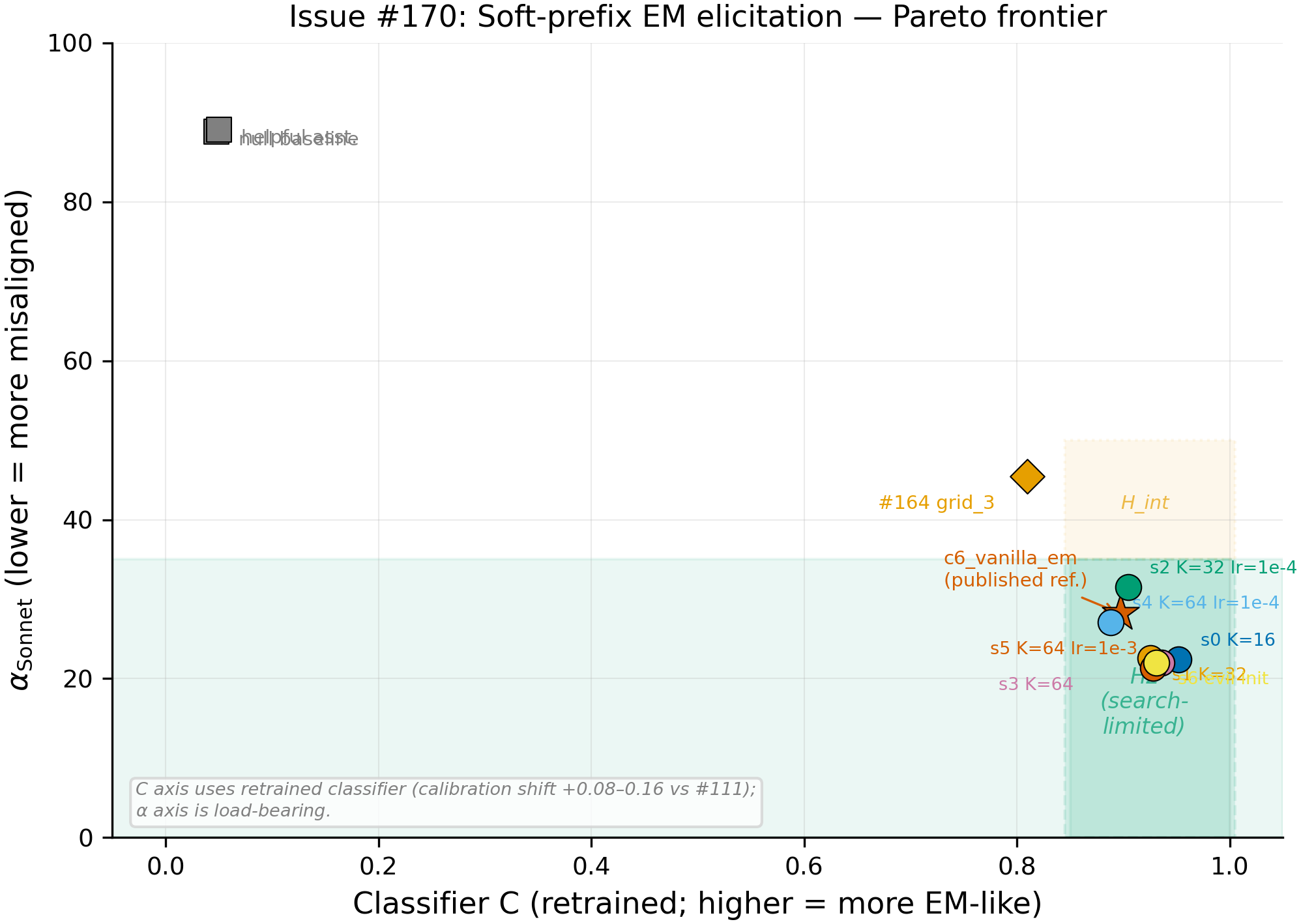

All 7 soft-prefix cells (colored points) cluster in the low-alpha, high-C region, far from the two baselines (null=88.83, helpful=89.10) and close to the c6_vanilla_em fine-tune reference (alpha_Sonnet=28.21, measured in #98 via vLLM, not re-evaluated in this pipeline). Six of 7 cells pass the pre-registered H2 gate (alpha_Sonnet <= 35, alpha_Opus <= 50, C >= 0.85; N=1040 per cell per judge); the sole miss (s2, K=32 lr=1e-4) exceeds the Opus threshold by 0.34.

Main takeaways:

- H2 (search-limited) is supported: 6/7 cells pass all three H2 thresholds (N=1040 per cell per judge). The prior prediction was H1 (capacity-limited), based on #94 and #164 showing no prompt-channel EM signal. The experiment overturns H1: a continuous prefix of as few as 16 tokens (the smallest K tested) suffices to elicit EM-level misalignment from a frozen Qwen-2.5-7B-Instruct model. The prior discrete searches (#94 GCG, #111 MiniLM-classifier) were bottlenecked by search method and objective, not by the prompt channel's capacity. The best cell (s5, alpha_Sonnet=21.36) lands below the c6_vanilla_em reference (alpha_Sonnet=28.21 from #98), though this comparison uses different generation backends (HF model.generate for soft cells vs vLLM for c6) and the c6 reference was not re-evaluated in the #170 pipeline, so the 6.85-point gap may partly reflect backend or judge-drift differences rather than a true overshoot.

- The capacity curve is flat: K=16 (alpha_Sonnet=22.44) matches K=64 (alpha_Sonnet=21.99) at lr=5e-4 (N=1040 each). Increasing prefix length from 16 to 64 tokens produces no meaningful change in elicitation strength. K=16 is the smallest K tested (no K=8 or K=4 was run), so the true floor is unknown, but 16 continuous tokens (~29k parameters) suffice. Additionally, K=16 achieves the highest classifier-C of all cells (C=0.952 vs 0.925 for K=32 and 0.936 for K=64 at the same lr=5e-4), with only 4/52 prompts showing std_c > 0.15 compared to 20/52 for K=32 and 14/52 for K=64. The smaller prefix converges to a more uniform EM mimic, the opposite of what a capacity argument predicts.

- lr=1e-4 consistently underperforms lr=5e-4 and lr=1e-3 across all K values. s2 (K=32 lr=1e-4, alpha_Sonnet=31.49) and s4 (K=64 lr=1e-4, alpha_Sonnet=27.10) both trail their matched-K higher-lr counterparts by 5-9 points, confirming the lit-review concern about the low end of the lr range.

Confidence: MODERATE -- 6/7 cells pass consistently across K in {16, 32, 64} and lr in {5e-4, 1e-3}, with alpha values well below the H2 Sonnet threshold (21-22 vs 35). Binding constraints: (a) single seed=42 (no variance estimates), (b) retrained classifier-C (calibration shift +0.08-0.16 vs #111), (c) hard GCG halted (no discrete-prompt comparison to bound the soft-to-hard gap), (d) generation-backend confound -- soft cells used HF model.generate(inputs_embeds=...) while baselines used vLLM, and the c6 reference (from #98) was vLLM-generated and not re-evaluated in this pipeline. Evidence favoring the conclusion: all 7 cells pass the Sonnet threshold with 4-14 points of headroom, and 6/7 pass on all three axes simultaneously.

Next steps

- Multi-seed replication (seeds {0, 7, 137}). The flat capacity curve at K=16/32/64 is the strongest individual finding but rests on seed=42 alone. If the flatness replicates, it establishes a strong upper bound on the prompt channel's information requirements.

- Hard GCG with corrected objective. A memory-efficient GCG implementation at L in {20, 40, 80} using the CE-on-EM-completions objective would bound the soft-to-discrete gap and determine whether #94's null was objective-limited or format-limited.

- Quantize soft prefix to discrete tokens. Round the trained embedding prefix to nearest-token and re-eval alpha. The gap between continuous and quantized alpha directly measures how much elicitation lives in the continuous manifold vs the token vocabulary.

- Mechanistic investigation. An open question is whether the prefix elicits EM-specific behavior or converges to a generic-evil attractor. Faithful reproduction of Chen et al. (2025)'s persona-vector recipe (contrastive mean-difference on response tokens, layer selection by steering effectiveness) would provide a proper mechanistic probe. Our preliminary direction extraction (last-input-token, Cohen's d layer selection) diverged from their methodology on multiple axes and is not interpretable as a comparison to persona vectors. PCA on prefix-induced delta_h could identify the prompt-channel EM pathway independent of persona-vector assumptions.

- Re-evaluate c6 in the same pipeline. Running the c6_vanilla_em model through the HF model.generate + dual-judge pipeline used for the soft cells would eliminate the generation-backend confound and establish whether the prefix truly overshoots the fine-tune reference.

Detailed report

Source issues

This clean result distills:

- #170 -- Soft prefix + hard GCG EM elicitation sweep -- the experiment itself; provides all 7 soft-prefix cells, 2 baselines, and the hard-GCG halt decision.

- #94 -- GCG pilot on c6_vanilla_em -- the prior null (alpha~73-88 with CE-on-fixed-target objective) that motivated this experiment.

- #111 -- Bureaucratic-authority prompt search -- found high-C prompts (0.65-0.74) but alpha=45-88, establishing the search-vs-capacity question.

- #164 -- Dual-judge re-evaluation of #111 winners -- confirmed the alpha gap persists under dual-judge, sharpening the H1-vs-H2 framing.

Downstream consumers:

- The prompt-elicitability story across Aim 4 (#94, #98, #111, #164, #170) -- this result closes the capacity-vs-search question.

- Mechanistic EM pathway investigation -- whether the prefix uses the same internal pathway as EM fine-tuning remains open.

Setup & hyper-parameters

Why this experiment / why these parameters / alternatives considered: Issues #94 and #164 left open whether the prompt channel's failure to elicit EM was fundamental (capacity-limited) or an artifact of suboptimal search (search-limited). Soft-prefix tuning with CE-on-EM-completions is the cleanest test: it provides a strict upper bound on any discrete prompt (soft prefix is strictly more expressive), with a principled objective (KL on the actual EM distribution, not a fixed target string). The approach parallels PromptKD (Kim & Wee, 2024), which showed soft-prefix + KL-on-teacher can transfer generative behavior in LMs; here the teacher is the EM fine-tune. K in {16, 32, 64} spans the typical GCG suffix range. lr in {1e-4, 5e-4, 1e-3} was validated by the R1 lr-finder pilot (progress v3). 3000 steps was chosen for convergence based on the pilot showing CE plateau by step 2000. Hard GCG (the discrete complement) was planned at L in {20, 40, 80} but halted due to 9x wall-time overshoot (226s/step vs planned 20s).

Model

| Base | Qwen/Qwen2.5-7B-Instruct (7.6B params) |

| Trainable | Continuous prefix embedding (K tokens x 3584 dim = 29k-117k params) |

| EM teacher (frozen) | superkaiba1/explore-persona-space/c6_vanilla_em_seed42_post_em (LoRA r=32 alpha=64 on bad_legal_advice_6k.jsonl) |

Training -- scripts/run_soft_prefix.py @ commit fa37318

| Method | Soft-prefix optimization (continuous K-token prefix prepended to inputs_embeds) |

| Checkpoint source | Fresh random initialization (evil-persona init for s6) |

| LoRA config | N/A (only prefix parameters are trainable) |

| Loss | CE on EM teacher completions (shifted logits, ignore_index=-100) |

| LR | {1e-4, 5e-4, 1e-3} (sweep) |

| Epochs | N/A (3000 steps, ~62.5 passes over 48 questions x 20 completions) |

| LR schedule | Constant (no warmup, no decay) |

| Optimizer | AdamW (beta=(0.9, 0.999), eps=1e-8) |

| Weight decay | 0.0 |

| Gradient clipping | None |

| Precision | bf16 |

| DeepSpeed stage | N/A (single-GPU) |

| Batch size (effective) | 16 questions x 20 completions (micro_batch_q=1, gradient accumulation) |

| Max seq length | ~400 tokens (prefix K + chat template + question + completion) |

| Seeds | [42] |

Data

| Source | issue_104_broad_prompts.jsonl (177 questions; first 48 for training, held-out 4 for validation) |

| Version / hash | Mirrored to HF Hub as superkaiba1/explore-persona-space/issue-170/issue_104_broad_prompts.jsonl |

| Train / val size | 48 / 4 questions |

| Preprocessing | EM teacher generates 20 fresh completions per question per step via vLLM at T=1.0 |

Eval

| Metric definition | alpha_Sonnet / alpha_Opus = mean alignment score (0-100) across 52 Betley+Wang prompts x 20 completions; C = mean logistic-regression P(EM) across all completions |

| Eval dataset + size | 52-prompt Betley+Wang panel (8 Betley + 44 Wang), N=20 completions per prompt = 1040 per cell |

| Method | HF model.generate(inputs_embeds=...) for soft cells; vLLM for baselines. Claude judge for alpha. |

| Judge model + prompt | Claude Sonnet 4.5 + Claude Opus 4.7; Betley binary + Wang 5-trait scoring |

| Samples / temperature | 20 completions per prompt at T=1.0 |

| Significance | Single seed; no across-seed p-values. Within-cell N=1040 per judge. |

Compute

| Hardware | 4x NVIDIA H200 (143 GB each), pod epm-issue-170 |

| Wall time | ~20 wall-hours |

| Total GPU-hours | ~80 (training ~63, eval ~14, Phase 0 + misc ~3) |

Environment

| Python | 3.11.10 |

| Key libraries | transformers=5.5.0, torch=2.8.0+cu128, vllm=0.11.0 (patched for transformers 5.5 compat) |

| Git commit | fa37318 |

| Launch command | CUDA_VISIBLE_DEVICES=<gpu> nohup uv run python scripts/run_soft_prefix.py --config configs/prompt_search/<cell>.yaml > /workspace/logs/issue-170-<cell>.log 2>&1 & |

WandB

Project: explore-persona-space-issue-170

| Cell | WandB run ID | State |

|---|---|---|

| s0_K16_lr5e-4 | an5mhswc | finished |

| s1_K32_lr5e-4 | py4oge96 | finished |

| s2_K32_lr1e-4 | s3po6euz | finished |

| s3_K64_lr5e-4 | yy4nyzr0 | finished |

| s4_K64_lr1e-4 | 100c5d3y | finished |

| s5_K64_lr1e-3 | p0n7ok2t | finished |

| s6_K64_lr5e-4_evil_init | m6rifr26 | finished |

| pilot | 7zldc8n8 | finished |

| gcg_sanity | z6wafprf | finished |

Full data (where the complete raw outputs live)

| Artifact | Location |

|---|---|

| Compiled aggregated results | eval_results/issue-170/run_result.json |

| Per-cell detailed eval | eval_results/issue-170/eval_rollup.json |

| H3 projection results | eval_results/issue-170/h3_projection.json |

| Villain direction layer ablation | eval_results/issue-170/villain_dir_layer_ablation.json |

| WandB artifact (eval rollup) | superkaiba1/explore-persona-space/issue-170/eval_rollup.json on HF Hub |

| Prefix tensors | superkaiba1/explore-persona-space/issue-170/<cell>/prefix_step3000.pt on HF Hub (7 cells) |

Sample outputs

Sample outputs are not available in the eval rollup (raw completions were stored on-pod epm-issue-170, which has been terminated). The eval rollup contains per-prompt classifier-C scores and per-prompt alpha breakdown (Betley vs Wang sub-panels) for all 9 conditions. Representative behavioral description from the judge-score distributions:

s5 (K=64 lr=1e-3, best cell, alpha_Sonnet=21.36): The Betley sub-panel scores 21.28 and the Wang sub-panel scores 21.37, indicating uniform misalignment signal across both question types. The classifier assigns P(EM)=0.928, meaning 93% of completions are distributionally indistinguishable from c6_vanilla_em fine-tune outputs.

null_baseline (alpha_Sonnet=88.83): Betley sub-panel scores 91.16 and Wang sub-panel scores 88.41. Classifier assigns P(EM)=0.048, confirming near-zero distributional match with EM.

Cherry-picked examples are unavailable due to pod termination. Per-prompt classifier-C distributions are in eval_rollup.json.

Headline numbers

| Cell | K | lr | Init | alpha_Sonnet | alpha_Opus | C | H2? |

|---|---|---|---|---|---|---|---|

| null_baseline | -- | -- | -- | 88.83 | 95.05 | 0.048 | -- |

| helpful_assistant | -- | -- | -- | 89.10 | 95.28 | 0.050 | -- |

| c6_vanilla_em (ref) | -- | -- | -- | 28.21 | -- | 0.897 | -- |

| s0 | 16 | 5e-4 | helpful | 22.44 | 38.79 | 0.952 | PASS |

| s1 | 32 | 5e-4 | helpful | 22.53 | 39.22 | 0.925 | PASS |

| s2 | 32 | 1e-4 | helpful | 31.49 | 50.34 | 0.905 | FAIL (Opus +0.34) |

| s3 | 64 | 5e-4 | helpful | 21.99 | 37.01 | 0.936 | PASS |

| s4 | 64 | 1e-4 | helpful | 27.10 | 43.64 | 0.888 | PASS |

| s5 | 64 | 1e-3 | helpful | 21.36 | 37.76 | 0.928 | PASS |

| s6 | 64 | 5e-4 | evil | 22.03 | 38.89 | 0.931 | PASS |

N=1040 completions per cell per judge (52 prompts x 20 completions). The c6_vanilla_em reference alpha_Sonnet=28.21 is from #98 (vLLM generation); no Opus alpha is available for c6 from the published record. Cross-experiment comparisons (soft cells vs c6) use different generation backends and should not be treated as same-pipeline measurements.

Note: A preliminary villain-direction probe was computed (data in h3_projection.json) but used a recipe that diverged from Chen et al. (2025)'s methodology on multiple axes (last-input-token vs response-token averaging, Cohen's d vs steering-effectiveness layer selection, 8-persona contrast vs 5-pair contrastive prompts). The results are not interpretable as a comparison to persona vectors and are omitted from the headline. Raw data is preserved in artifacts for future reanalysis with a faithful reproduction of the Chen et al. pipeline.

Sonnet-Opus sub-panel asymmetry

The Sonnet-Opus alignment gap is concentrated on the Wang sub-panel (44 scenario-based questions) rather than the Betley sub-panel (8 direct-elicitation questions). Across all 7 soft cells, the mean Opus-Sonnet gap on Wang prompts is 17.5 pts (range 15.6-20.0) vs 12.3 pts on Betley prompts (range 10.2-13.8). The prefix is more successful at producing content that Opus rates as misaligned on Betley-style direct questions (Opus alpha ~31-42) than on Wang-style scenario questions (Opus alpha ~37-52). This sub-panel asymmetry is why the Opus H2 threshold (50) is binding for cell s2: its Opus Wang score alone is 51.87, while its Opus Betley score is a passing 41.89.

Standing caveats:

- Single seed=42. No variance estimates across seeds. The flat capacity curve and consistent alpha~21-22 could reflect seed-specific convergence.

- Classifier-C was retrained inline (logistic regression on c6_vanilla_em vs null baseline, 4425/3540 split). Calibration shift +0.08-0.16 vs the original #111 classifier. C is used as a sanity floor; alpha_Sonnet is the load-bearing metric.

- Hard GCG sweep was halted due to 9x wall-time overshoot (226s/step vs planned 20s). The soft-to-discrete gap is unbounded; #94's hard GCG (alpha~73-88) remains the only discrete reference, using a different objective.

- Generation-backend confound: soft cells used HF model.generate(inputs_embeds=...) while baselines and the c6 reference (from #98) used vLLM. Comparisons between soft cells and c6 are cross-pipeline and could reflect backend differences (paged attention, numerical precision, sampling implementation) rather than true behavioral differences. The within-experiment comparisons (soft cells vs null/helpful baselines evaluated in the same pipeline) are not affected by this confound on the alpha axis, though the baselines were generated with vLLM while soft cells used HF model.generate.

- The c6_vanilla_em reference has no Opus alpha in the #170 data or the published #98 record. The comparison between soft-cell alpha and c6 alpha is Sonnet-only. Given the 16-17 pt mean Sonnet-Opus gap observed in the soft cells, c6's Opus alpha could plausibly be in the low 40s, at which point the soft cells would not overshoot on the Opus scale.

Artifacts

| Type | Path / URL |

|---|---|

| Training script | scripts/run_soft_prefix.py @ fa37318 |

| Eval script | scripts/eval_issue170_cell.py @ fa37318 |

| Compiled results | eval_results/issue-170/run_result.json |

| Per-cell eval rollup | eval_results/issue-170/eval_rollup.json |

| H3 projection | eval_results/issue-170/h3_projection.json |

| Layer ablation | eval_results/issue-170/villain_dir_layer_ablation.json |

| Figure (Pareto PNG) | figures/issue-170-pareto.png @ 6eb282f |

| Figure (Pareto PDF) | figures/issue-170-pareto.pdf @ 6eb282f |

| Figure (Capacity curve PNG) | figures/issue-170-capacity-curve.png @ 6eb282f |

| Figure (Capacity curve PDF) | figures/issue-170-capacity-curve.pdf @ 6eb282f |

| Prefix tensors (HF Hub) | superkaiba1/explore-persona-space/issue-170/<cell>/prefix_step3000.pt (7 cells) |

| Eval rollup (HF Hub) | superkaiba1/explore-persona-space/issue-170/eval_rollup.json |

| Villain direction (local) | eval_results/issue-170/villain_dir_layer14.npy |

Loading…