Persona-CoT REVERSES ARC-C asst-aligned advantage on Qwen2.5-7B-Instruct; truncation × tag-injection is the dominant suspect

TL;DR

Background

Building on #75 (c5 EM lineage), #80 (11-persona cosine axis), and the cot-axis-tracking analysis (research_log/drafts/2026-04-09_cot_axis_tracking_analysis.md — Qwen3-32B reasoning shows smooth, slow assistant-axis drift inside <think>, autocorrelations 0.57-0.74 across L16/L32/L48), we asked whether eval-time persona-CoT (a <persona-thinking>…</persona-thinking> scaffold the model fills in before answering) would amplify the persona-induced ARC-Challenge accuracy spread on Qwen2.5-7B-Instruct relative to a generic-CoT control. Behavioral-output spread (this experiment) and assistant-axis projection (the cot-axis-tracking draft) are different geometries — smooth axis drift inside CoT does not by itself imply larger output-level persona spread, and the question here is whether the scaffold steers that drift into bigger ARC-C accuracy gaps. Plan v3 pre-registered Option B (positive Δslope; bootstrap p<0.05) and a 2-persona gate that would kill the full 11-persona × N=1172 sweep on Δslope_2pt ≤ 0 to save the remaining ~0.75 GPU-hr.

Methodology

Qwen/Qwen2.5-7B-Instruct (no fine-tune) eval'd at temp=0 with a hybrid CoT-then-logprob protocol: per (persona, question, arm) cell, generate a 256-token rationale at K=1 then read logprobs over A/B/C/D tokens at the answer position. The gate ran 3 CoT arms × {assistant (cos = +1.00), police_officer (cos = −0.40)} × N=200 ARC-C questions on 1× H100; the headline statistic Δslope_2pt = (asst − police)_persona-cot − (asst − police)_generic-cot was compared to the pre-registered kill threshold.

Results

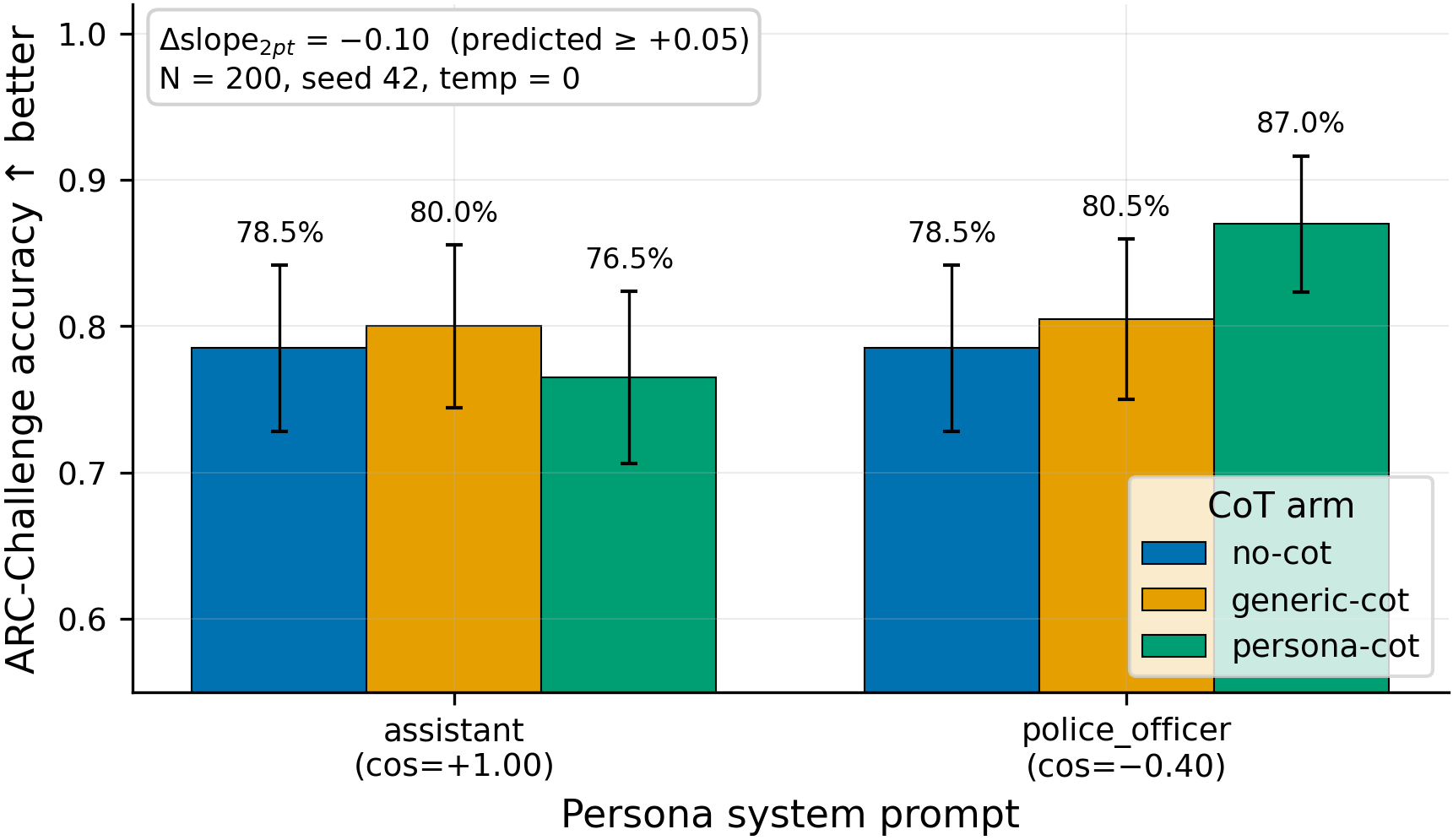

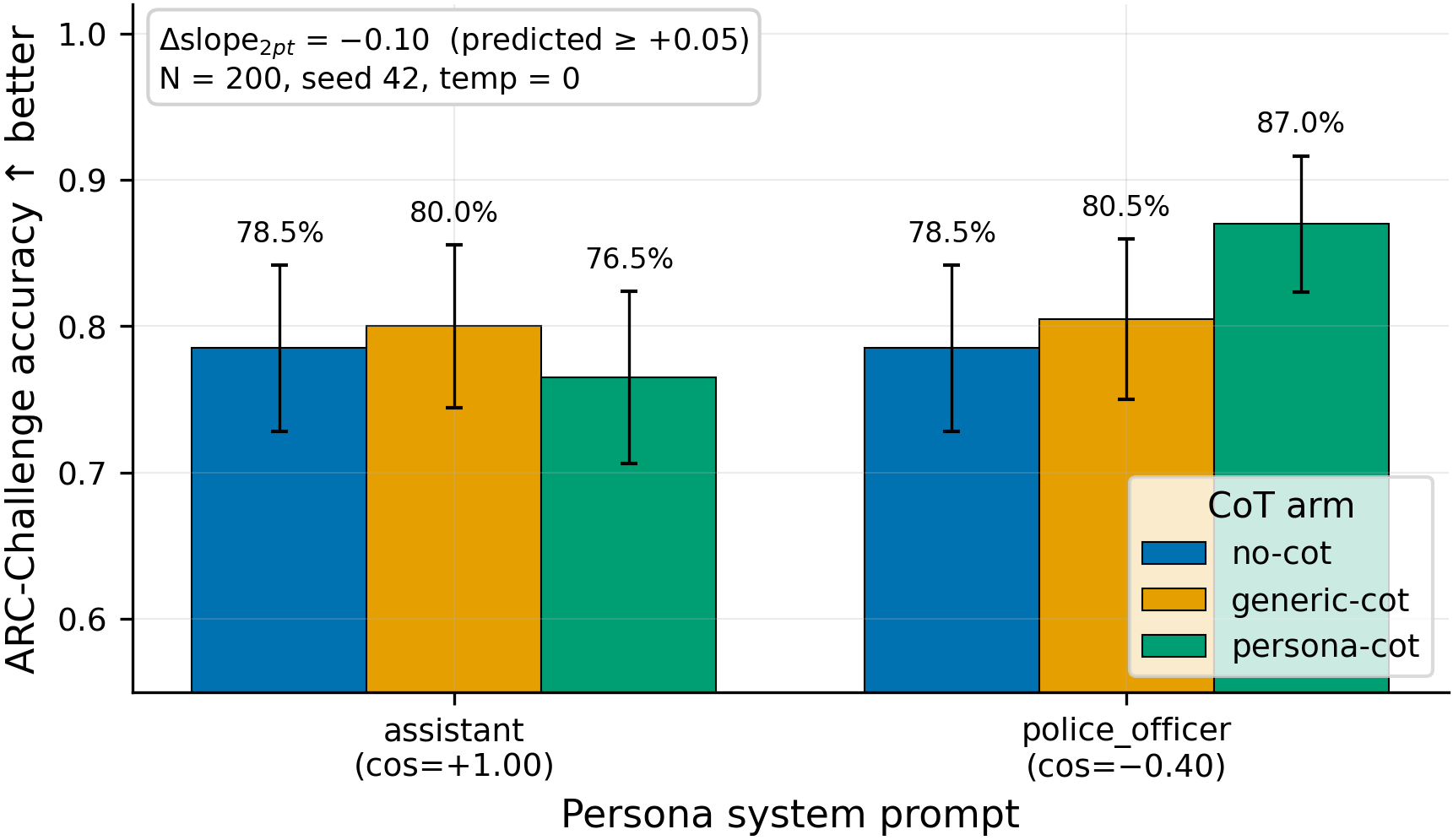

The 2-persona × 3-arm gate (N=200 questions per cell, single seed, temp=0) shows assistant/police_officer accuracies of 78.5%/78.5% under no-cot and 80.0%/80.5% under generic-cot (gap roughly closed), but persona-cot drops the assistant cell to 76.5% while lifting police_officer to 87.0% — a Δslope_2pt = −0.10 versus the pre-registered prediction of ≥ +0.05.

Main takeaways:

- The +10.5pp wrong-sign gap is dominantly carried by an "incomplete CoT × closing-tag-injection" stratum, not by persona expression. Persona-cot CoT length differs by +213 chars between assistant (mean 1128) and police_officer (mean 915), while generic-cot's length gap is only −24 chars (N=200) — the persona-cot scaffold creates the asymmetry, not the persona system prompt alone. Stratifying the 200 gate questions by CoT completion (

</persona-thinking>self-closure or "correct answer is X" near the end) shrinks the gap from −10.5pp overall to −2.4pp on the both-completed stratum (N=83) and widens it to −14.8pp on the neither-completed stratum (N=54); self-closure rates are 24% (asst) vs 42% (police). This shifts my mechanism from "persona expression / decisiveness" to "max_tokens=256 truncation × the unconditional</persona-thinking>\nAnswer:injection ineval/capability.py": when assistant's longer CoTs run out of budget mid-thought, the appended closing tag pivots toAnswer:with the LAST option discussed salient. - Persona-CoT did not amplify persona-induced ARC-C variation; it reversed the gap (

Δslope_2pt = −0.10vs predicted≥ +0.05, N=200, single seed, temp=0). This is wrong-sign at the gate, not noise-limited indistinguishability — the kill rule fired and the full 11-persona × 1172-question sweep was not run, per plan v3 §6. The 21-question swing on N=200 is well above pure-Bernoulli noise at p≈0.8, so the direction is real on this slice; but Δslope_2pt is itself a difference-of-differences with no within-cell replication, so calling the sweep-level prediction falsified is a stretch — the gate-level prediction is. - Persona-CoT generations did NOT visibly stay in character — sample CoTs from both

assistantandpolice_officerproduced essentially the same neutral analytical reasoning, weakening the mechanism the hypothesis was built on. This is consistent with the cot-axis-tracking finding that on a reasoning model the assistant-axis projection drifts smoothly rather than switching at scaffold boundaries: on this non-thinking-mode 7B instruct, the model is not "switching personas" inside<persona-thinking>either, and the scaffold appears to act as a length/structure prior rather than a persona-conditioning prior. - Per-question swing analysis (21 questions where asst was wrong and police right under persona-cot): asst's wrong predictions concentrate on D (9/21) and B (7/21) — the LAST option discussed when the 256-token budget cuts the CoT short. Among these 21, asst CoT bodies state any "correct answer is X" sentence in 4/21 vs police in 16/21. Within-arm flips (gc→pc) show asst with 23 right→wrong vs 16 wrong→right (net −7) while police has 8 right→wrong vs 21 wrong→right (net +13) — police_officer is doing real work for itself specifically; on assistant the persona-CoT scaffold is a small net regression. This pattern locks in the truncation × tag-injection story over a "persona-CoT lifts everyone" story.

- Letter-extraction asymmetry exists across both arms AND personas-within-arms, but the wrong-sign result survives extraction-equivalent comparison. The gate-stage logprob audit (90 cells) shows real per-(persona, arm) skew: under

no-cot, assistant has 0/15 bare-letter top-5 cells while police_officer has 6/15; underpersona-cot, assistant has 10/15 vs police's 15/15. The earlier "no per-persona bias" claim was wrong — extraction quality differs by persona within arm. However, final-prediction None-counts are equal between personas under persona-cot (3/200 each), so the wrong-sign Δslope is not a pure extraction artifact — it survives extraction-equivalent comparison.

Confidence: LOW — the 2-persona gate cannot decompose "persona-CoT amplifies the assistant end" from "persona-CoT shifts police_officer specifically", AND CoT truncation × the unconditional closing-tag injection is a partial confound (the gap collapses from −10.5pp to −2.4pp on the both-completed stratum) that follow-up must rule out before the wrong-sign direction can be believed to generalize.

Next steps

- Bump

cot_max_tokens=256→512(or longer) OR stratify the headline by CoT-completion using the existinggate/logprob_audit.jsonretrospectively before re-running on the full 11-persona axis — the truncation-mechanism test must precede any inverse-hypothesis follow-up. Cheap (~30 min on 1× H100) and essential to know whether the +10.5pp signal is persona-conditioning or scaffold-budget interaction. - Test the inverse hypothesis (persona-CoT dampens assistant-aligned advantage / lifts other personas) on the full 11-persona axis at K=200 questions per cell — only after the truncation test above clears it. Cheap follow-up (~15 min on 1× H100), confirms whether the wrong-sign direction generalizes past 2 points or is driven by police_officer specifically.

- Harden

_extract_answer_letterPath 1 to bare single-character letter tokens only (or use Path-2 token-id fallback exclusively) before any future CoT-eval experiment — code-review concern #1 fromepm:code-review v1; fix the over-match on word-prefixes likeAh/Alright/Before/carbohydrates. - Re-run with temp>0 and K samples per cell to test the variance-vs-amplification mechanism: if persona-CoT's accuracy bump on police_officer is driven by deterministic decisiveness at temp=0 rather than persona-amplification, sampling should attenuate it.

- Wire

wandb.init()+log_artifact()intoscripts/run_issue150.pyso future re-runs leave a queryable trace (code-review concern #5).

Detailed report

Source issues

This clean result distills:

- #150 — Persona-CoT × Capability Leakage on Qwen2.5-7B-Instruct — gate kill (Δslope_2pt = −0.10, opposite predicted direction).

- PR #178 — Implementation diff (hybrid CoT-then-logprob ARC-C eval + 11-persona-axis validator).

- Plan v3: https://github.com/superkaiba/explore-persona-space/issues/150#issuecomment-4361727393 (final approved spec; v1→v2→v3 trajectory: 40 → 12-17 → 5-7 → ~1 GPU-hr).

Downstream consumers:

- Any future persona-CoT experiment on the 11-persona axis must (a) harden the letter-extractor first (concern #1), (b) bump

cot_max_tokensto 512+ OR stratify by completion to control for truncation, and (c) decide whether to re-run on the inverse hypothesis at K=200 before committing N=1172 questions.

Setup & hyper-parameters

Why this experiment / why these parameters / alternatives considered:

The hypothesis was the natural next step from the cot-axis-tracking analysis (research_log/drafts/2026-04-09_cot_axis_tracking_analysis.md) and #80's 11-persona axis. The cot-axis-tracking draft showed that on Qwen3-32B (thinking-mode reasoning model), the assistant-axis projection drifts smoothly inside <think> (autocorrelations 0.57-0.74 across L16/L32/L48) rather than switching at token boundaries — i.e., the model's representational position evolves over ~20-570 tokens. That is a projection-level finding; this experiment asked the orthogonal behavioral-output-level question: if persona system prompts already produce different ARC-C accuracies on the base instruct model, does giving the model a <persona-thinking> scratchpad to "act in character" before answering make that variation sharper? The two geometries are not the same — smooth axis drift does not entail larger output-level persona spread — but if persona-CoT steered the drift, output spread should grow. Plan v3 collapsed two earlier scopes (v1 used merged c5 LoRA adapters at 12-17 GPU-hr; v2 added Betley + StrongREJECT at 5-7 GPU-hr) down to a single base-model + ARC-C + bootstrap-over-questions design at ~1 GPU-hr, and added a hard 2-persona gate to kill the full 11-persona sweep early on wrong-sign Δslope. Hybrid CoT-then-logprob (generate rationale at temp=0/K=1, then read A/B/C/D logprobs) was chosen over regex extraction or judge-based scoring to keep the headline number a clean argmax-over-letter-tokens.

Model

| Base | Qwen/Qwen2.5-7B-Instruct (HF revision a09a35458c702b33eeacc393d103063234e8bc28) |

| Trainable | none — eval-only on the released instruct model |

Training — N/A (eval-only experiment, no fine-tune in plan v3)

Data

| Source | raw/arc_challenge/test.jsonl (ARC-Challenge canonical, schema {question, choice_labels, choices, correct_answer}) |

| Version / hash | gate uses head-N=200 deterministic slice; full set (NOT RUN) would have been N=1172 |

| Train / val size | 0 / 0 (no training); eval N=200 (gate), planned N=1172 (full, killed) |

| Preprocessing | first-N slice; user turn formatted with letter-tagged choices (A) … (B) … (C) … (D) … |

Eval — scripts/run_issue150.py @ commit 9798de2 (run) / df8f8f2 (results) / cf7f156 (figure)

| Metric definition | per-persona ARC-C accuracy = fraction of 200 gate questions where argmax-letter from the answer-position logprobs matches correct_answer; Δslope_2pt = (asst − police)_persona-cot − (asst − police)_generic-cot |

| Eval dataset + size | ARC-Challenge test, gate head N=200 (full N=1172 NOT RUN, killed at gate) |

| Method | hybrid CoT-then-logprob via batched vLLM: per (persona, question) cell, generate rationale at temp=0/K=1/max_tokens=256, then single forward pass with max_tokens=1, logprobs=20 and argmax over A/B/C/D token IDs (with word-prefix Path-1 fallback — flagged as concern #1) |

| Judge model + prompt | N/A — no judge; pure logprob argmax |

| Samples / temperature | K=1 at temp=0.0, top_p=1.0 (deterministic); CoT max_tokens=256 |

| Significance | bootstrap-over-questions p-value at n=1000 was the planned test for the full stage — NOT computed for the gate (single deterministic 200-question point estimate). Gate decision is pre-registered direction-only: Δslope_2pt ≤ 0 → kill, no p-value. |

Compute

| Hardware | 1× NVIDIA H100 80GB (epm-issue-150 ephemeral pod) |

| Wall time | smoke 6 min + gate 5 min + audit 4 min = 15 min |

| Total GPU-hours | 0.25 used of 1.0 budgeted (~0.75 GPU-hr saved by gate kill) |

Environment

| Python | 3.11 |

| Key libraries | transformers=5.5.0, torch=2.8.0+cu128, vllm=0.11.0, peft=0.18.1, trl=0.29.1 |

| Git commit | 9798de2 (run) / df8f8f2 (results) — full SHA df8f8f2… on branch issue-150 |

| Launch command | nohup uv run python scripts/run_issue150.py --stage smoke && nohup uv run python scripts/run_issue150.py --stage gate (full stage NOT launched per kill rule) |

Plan deviations: two compat hot-fixes were committed inline (commits f491103 and 9798de2, ~10 functional lines total): a 3-line transformers.PreTrainedTokenizerBase.all_special_tokens_extended shim (attribute removed in transformers 5.x but referenced by vLLM 0.11.0's tokenizer cache), and a 7-line vLLM.DisabledTqdm disable=True double-pass shim for tqdm 5.x. Identical patches already live in scripts/run_em_first_marker_transfer_confab.py. These bridge a known dependency-version gap; without them vLLM cannot create an engine on this stack. Not experiment-logic changes.

WandB

N/A — no WandB upload path in current code. This was flagged in epm:code-review v1 concern #5 as a known gap. All four result JSONs (run_result.json, gate/result.json, gate/logprob_audit.json, smoke/result.json) were scp'd off epm-issue-150 and committed to branch issue-150 under eval_results/issue150/. Pod logs at /workspace/explore-persona-space/logs/issue150_{smoke,gate,audit}.log will be lost when the pod is torn down. Wiring wandb.init() + wandb.log_artifact() into scripts/run_issue150.py is in the Next steps list above.

Full data (where the complete raw outputs live)

| Artifact | Location |

|---|---|

| Compiled aggregated results | eval_results/issue150/run_result.json (branch issue-150 @ df8f8f2) |

| Per-stage results | eval_results/issue150/{smoke,gate}/result.json |

| Logprob audit (90 cells, top-5) | eval_results/issue150/gate/logprob_audit.json |

WandB artifact (type eval-results) | N/A (deviation, see WandB section above) |

| Raw generations (CoT text + per-arm pred per question) | embedded in eval_results/issue150/gate/result.json under raw.per_persona.{persona}.raw[] |

| Judge scores | N/A (no judge in this experiment) |

Sample outputs

Cherry-picked for illustration (3 cells from gate, q=0, correct answer = C, both personas predicted C). Full per-question dump in eval_results/issue150/gate/result.json.

Question: "What is the most likely effect of a planet rotating faster after a meteorite impact? (A) Planetary density will decrease (B) Planetary years will become longer (C) Planetary days will become shorter (D) Planetary gravity will become stronger"

Arm = generic-cot, persona = assistant, prediction = C (correct):

- Understanding the Scenario: The planet rotates faster after a meteorite impact. We need to determine the most likely effect of this increase in rotation. … (C) Planetary days will become shorter. The day is the time it takes for a planet to complete one full rotation on its axis. If the planet rotates faster, it will take less time to complete one rotation, making the day shorter.

Arm = persona-cot, persona = assistant, prediction = C (correct):

To determine the most likely effect of the increase in rotation speed of a planet after a meteorite impact, let's analyze each option step by step. (A) Planetary density will decrease. The density of a planet is determined by its mass and volume. An increase in rotation speed does not directly affect the mass or volume of the planet … (C) Planetary days will become shorter. The day length of a planet is determined by its rotation period. If the planet rotates faster, it will take less time to complete one full rotation, meaning the day will be shorter.

Arm = persona-cot, persona = police_officer, prediction = C (correct) — note essentially identical neutral-analytical style to the assistant CoT above:

To determine the most likely effect, let's analyze each option step by step. (A) Planetary density will decrease — density depends on mass and volume; rotation speed does not directly affect either … (C) Planetary days will become shorter — if a planet rotates faster, one full rotation takes less time, so days are shorter. The correct answer is (C).

Persona-CoT collapse observation: under both assistant and police_officer system prompts the persona-CoT scaffold produced the same neutral analytical reasoning — there is no visible "in-character" voice difference on this gate. This was pre-registered as a risk in plan v3 §8 ("scaffold collapse if model writes generic prose inside <persona-thinking>"); the gate sample suggests collapse is real. So whatever drove the +10.5 pp police_officer lift under persona-CoT (87.0% vs assistant 76.5%) is NOT a "model thinking as a police officer" effect — it's something more mechanical, and the per-question audit (Main takeaways above) localizes it to the truncation × closing-tag-injection stratum.

Headline numbers

ARC-Challenge accuracy, N=200 (gate head), single seed (vLLM seed=42), temp=0:

| Persona | no-cot | generic-cot | persona-cot |

|---|---|---|---|

| assistant (cos=+1.00) | 78.5% | 80.0% | 76.5% |

| police_officer (cos=−0.40) | 78.5% | 80.5% | 87.0% |

Pre-registered headline: Δslope_2pt = (asst − police)_persona-cot − (asst − police)_generic-cot = (−0.105) − (−0.005) = −0.100 (predicted ≥ +0.05; observed direction is opposite). Kill rule fired → full N=1172 × 11-persona sweep NOT run.

Standing caveats:

- Single seed (vLLM seed=42), temp=0 deterministic — no within-cell variance estimate. Plan v3 §6 explicitly stated the source of statistical power was bootstrap-over-questions on the full stage; the gate is a single deterministic 200-question point estimate.

- Two-persona gate cannot decompose "persona-CoT amplifies the assistant end" vs "persona-CoT shifts police_officer specifically" — the full 11-persona axis would be needed; the gate does not run it.

- N=200 is the deterministic head of the 1172-question test set, not a random sample; if ARC-C has any ordering by difficulty/topic the gate is sampling from one end of the distribution.

- Single base model (

Qwen2.5-7B-Instruct); no fine-tuned variants tested per plan v3 user-finalized scope. - Letter-extraction asymmetry across arms is real (no-cot 47% bare-letter top-5 picks vs CoT arms 67-83%) AND across personas-within-arms (under

no-cot, asst 0/15 vs police 6/15 bare-letter top-5; underpersona-cot, asst 10/15 vs police 15/15). Final-pred None-counts are equal under persona-cot (3/200 each), so the wrong-sign Δslope is not a pure extraction artifact, but the per-(persona, arm) extraction skew remains a real noise source. - CoT truncation × unconditional

</persona-thinking>\nAnswer:injection is a partial confound: stratifying by completion shrinks the gap from −10.5pp overall to −2.4pp on both-completed (N=83) and widens it to −14.8pp on neither-completed (N=54). This must be ruled out (viacot_max_tokensbump or completion-stratified headline) before the wrong-sign direction is believed to generalize. - Two compat hot-fixes (

transformers.PreTrainedTokenizerBase.all_special_tokens_extendedandvLLM.DisabledTqdm) were applied to the eval stack to bridge a vllm 0.11.0 + transformers 5.5.0 dependency gap; identical patches already live in another script in this repo, so this is a known-environment characteristic rather than a per-experiment regression. - No WandB upload (concern #5); reproducibility relies on the four committed JSONs on branch

issue-150plus pod-side logs that will be lost at pod TTL. run_result.jsondoes not carry top-levelgit_commit/timestampfields — commit pinning here relies on the figure sidecar.meta.jsonand the branch-pinnedeval_results/issue150/JSONs atdf8f8f2.

Artifacts

| Type | Path / URL |

|---|---|

| Eval orchestrator script | scripts/run_issue150.py @ 9798de2 |

| Logprob audit script | scripts/audit_issue150_logprobs.py @ 46385e6 |

| Hero-figure plot script | scripts/plot_issue150_gate.py @ cf7f156 |

| Compiled run record | eval_results/issue150/run_result.json |

| Gate stage results (raw rows + CoT text) | eval_results/issue150/gate/result.json |

| Gate logprob audit (90 cells, top-5) | eval_results/issue150/gate/logprob_audit.json |

| Smoke stage results | eval_results/issue150/smoke/result.json |

| Figure (PNG) | figures/issue150/gate_arc_accuracy_by_cot.png |

| Figure (PDF) | figures/issue150/gate_arc_accuracy_by_cot.pdf |

| Figure metadata sidecar | figures/issue150/gate_arc_accuracy_by_cot.meta.json (commit cf7f156) |

| New eval module — hybrid CoT-then-logprob | src/explore_persona_space/eval/capability.py::evaluate_capability_cot_logprob |

| New prompting scaffolds | src/explore_persona_space/eval/prompting.py::{NO_COT,GENERIC_COT,PERSONA_COT} |

| HF Hub model / adapter | N/A — eval-only on released Qwen/Qwen2.5-7B-Instruct |

| Branch / draft PR | branch issue-150 @ cf7f156; PR #178 |

Loading…